Why Megaprojects Are Execution Nightmares (And Why It’s Predictable)

The failure rate isn’t random. It scales.

A 2mm steel tolerance issue in a mid-sized project? Annoying, but manageable. The same deviation in a high-rise with 10,000 beams? A logistics disaster.

The data makes it clear. Nine out of ten megaprojects exceed budgets, and 61% miss their original schedules. The California High-Speed Rail, initially priced at $33B, is now projected at $113B—an increase of 242%. It’s not because of bad engineering or faulty materials. It’s because execution failures compound as projects scale. A minor misalignment in modeling, repeated across thousands of prefabricated elements, leads to weeks of site adjustments and millions in overruns.

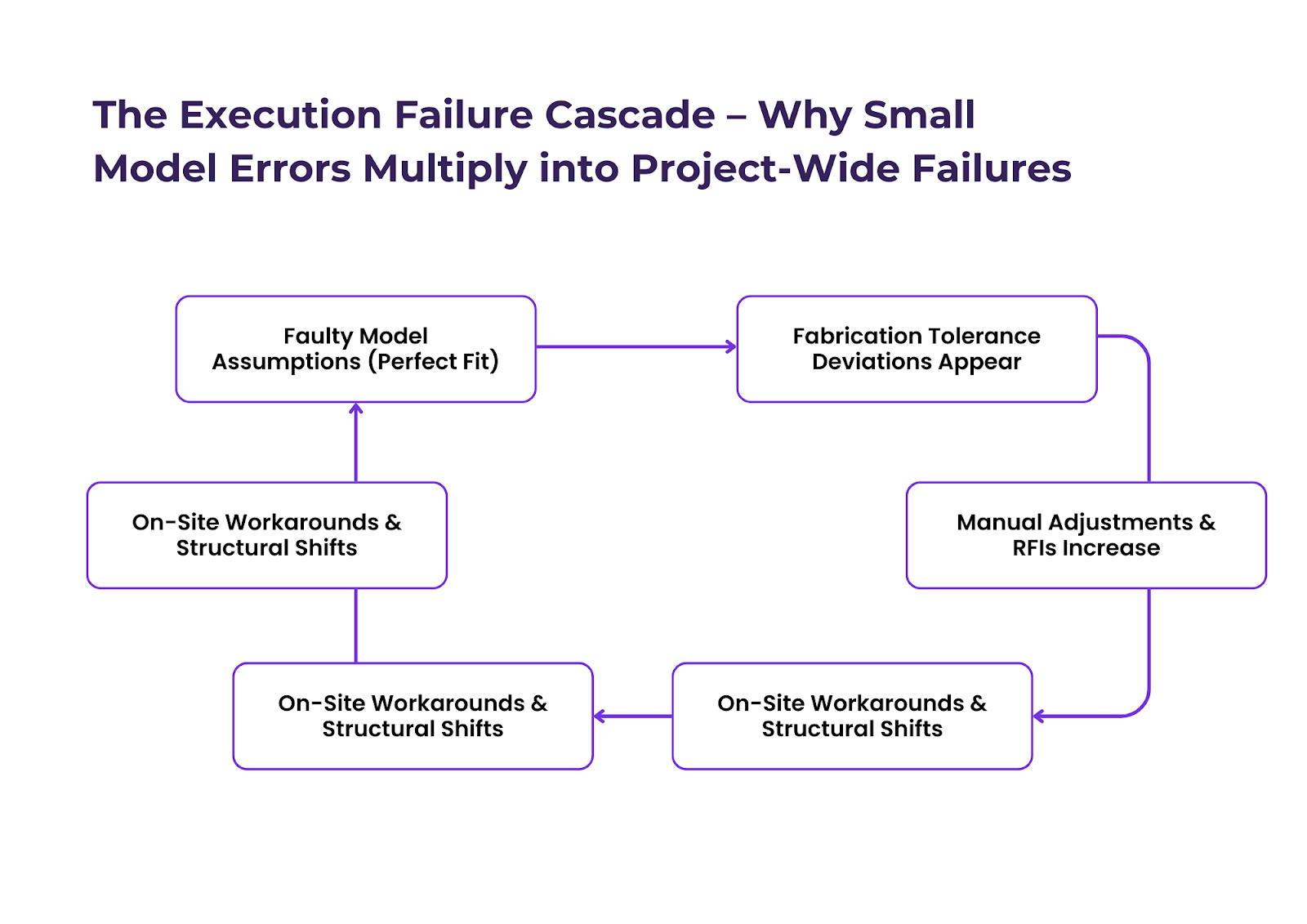

Call it the Scale Complexity Law: as projects grow, execution failures don’t increase linearly; they compound. And yet most firms still assume they can fix execution issues downstream instead of embedding execution logic into the model itself.

That’s why BIM and digital twins haven’t solved the problem.

Why More Software Hasn’t Reduced Execution Failures

We assumed that BIM adoption would eliminate these issues. Clash detection, AI-driven validation, digital twins—all of it was supposed to reduce rework. But rework costs haven’t gone down.

A model can be “clash-free” and still fail on-site.

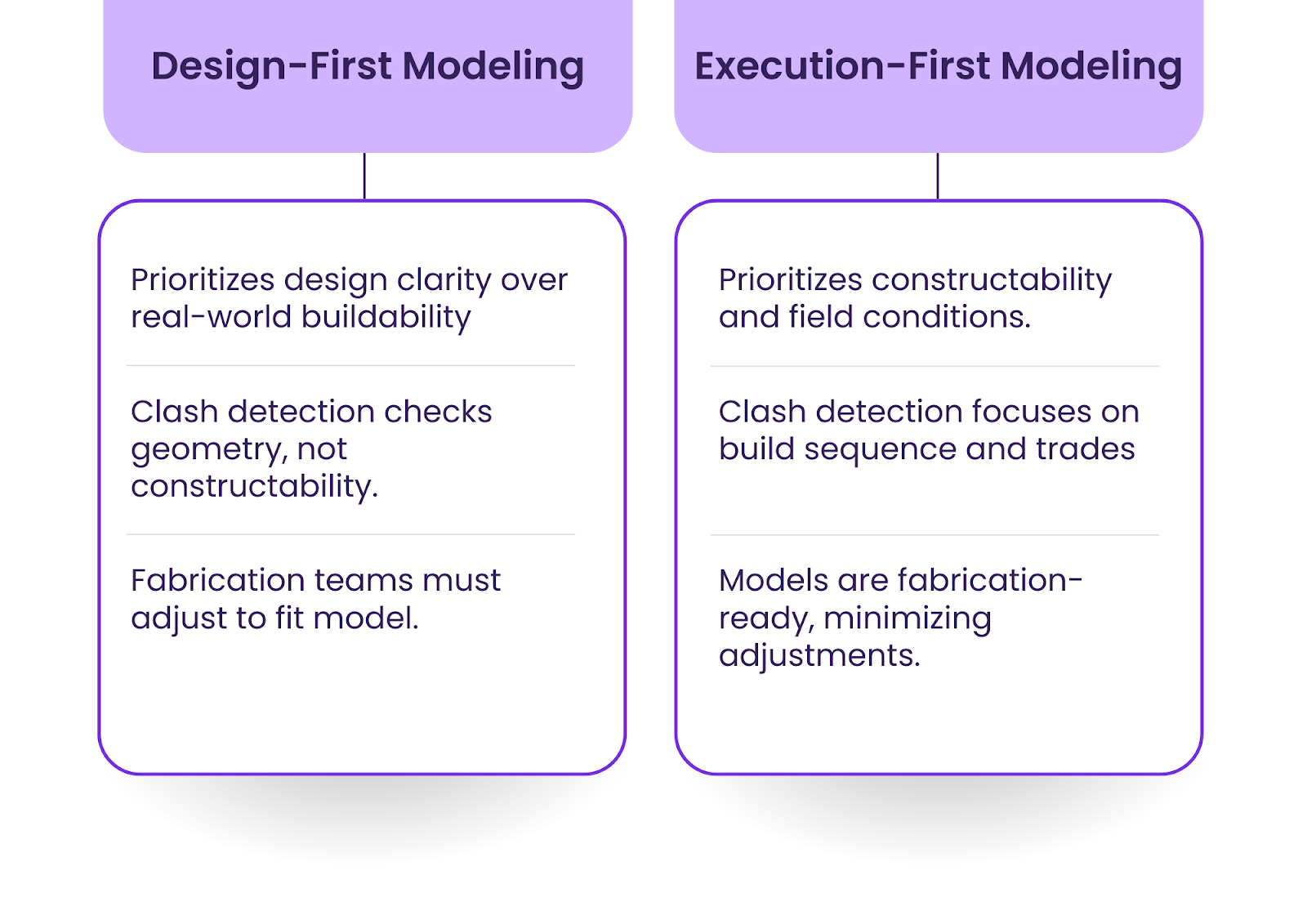

Clash detection only catches geometric conflicts—it doesn’t validate whether a model is actually buildable. It doesn’t account for fabrication tolerances, on-site sequencing constraints, or installation logistics.
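To illustrate the gap between “clash-free” and “buildable,” here is a minimal sketch. It assumes a simplified one-dimensional interface and a worst-case rule in which each face bounding a gap may deviate toward it by its full tolerance. The `Interface` record and `review` function are hypothetical and not part of any BIM tool’s API. The ±2mm steel and ±5mm precast values are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Interface:
    """Hypothetical record of one interface between two modeled elements (values in mm)."""
    name: str
    nominal_gap_mm: float            # gap in the model; > 0 means no geometric clash
    face_tolerances_mm: tuple        # ± tolerance of each face bounding the gap
    min_install_gap_mm: float = 0.0  # assumed minimum gap needed to install the element


def review(interface: Interface) -> dict:
    """Compare what a clash check sees (nominal geometry) with a worst-case tolerance check."""
    clash_free = interface.nominal_gap_mm > 0
    # Worst case: every face deviates toward the gap by its full tolerance.
    worst_gap = interface.nominal_gap_mm - sum(interface.face_tolerances_mm)
    return {
        "name": interface.name,
        "clash_free": clash_free,
        "worst_case_gap_mm": worst_gap,
        "buildable": worst_gap >= interface.min_install_gap_mm,
    }


# A 6 mm modeled gap between a steel beam (±2 mm) and a precast panel (±5 mm).
result = review(Interface("beam-to-panel", 6.0, (2.0, 5.0)))
print(result)
# {'name': 'beam-to-panel', 'clash_free': True, 'worst_case_gap_mm': -1.0, 'buildable': False}
```

A clash check would pass this interface. The tolerance-aware check flags that the parts can overlap by up to 1 mm once real fabrication variance is considered.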

And that’s why high-tech firms still face the same failures as low-tech firms. The firms that actually outperform aren’t the ones with the most advanced modeling tools; they’re the ones that model execution-first.

Instead of assuming a model is correct and fixing errors later, they validate models against real-world tolerances before fabrication begins.

The Fatal Flaw in Architectural Models: Precision Without Real-World Constraints

Digital models assume a level of precision that fabrication can’t deliver.

Steel doesn’t arrive perfectly cut to the millimeter. Concrete doesn’t pour at exact volume. A ±2mm steel deviation might seem trivial in a single beam, but when repeated across an entire floor plate, it forces site welding and weeks of adjustments.
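The way a ±2mm deviation accumulates can be sketched with classic tolerance stack-up arithmetic. This assumes a simplified run of identical beams laid end to end. It compares the worst case, where every beam is at its limit in the same direction, with the root-sum-square (RSS) estimate, which assumes independent, centered deviations. `stack_up` is a hypothetical helper.

```python
import math


def stack_up(n_members: int, tol_mm: float) -> tuple[float, float]:
    """Cumulative deviation of n members laid end to end, each within ±tol_mm."""
    worst_case = n_members * tol_mm      # every member at its limit, same direction
    rss = math.sqrt(n_members) * tol_mm  # root-sum-square: independent, centered deviations
    return worst_case, rss


for n in (1, 10, 40):
    wc, rss = stack_up(n, 2.0)
    print(f"{n:>3} beams: worst case ±{wc:.1f} mm, RSS ±{rss:.1f} mm")
```

Even the optimistic RSS estimate grows with the square root of the run length. A deviation that is negligible in one beam can therefore exceed a connection’s adjustment range across a floor plate.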

Precast concrete is even less forgiving. Variances of ±5mm are common, yet models assume perfectly flush connections. That’s why entire façade systems have to be reworked on-site: what was designed to fit in theory doesn’t fit in reality.
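A quick way to see why “perfectly flush” fails is to compute the range of joint widths a ±5mm variance actually produces. This minimal sketch treats the variance as applying independently at each of the two panel edges that bound a joint. The 10 to 30 mm allowable joint range in the example is a hypothetical placeholder, not a code or manufacturer value.

```python
def joint_range(nominal_mm: float, edge_tol_mm: float) -> tuple[float, float]:
    """Narrowest and widest joint when each of the two bounding edges may sit ±edge_tol_mm off."""
    return nominal_mm - 2 * edge_tol_mm, nominal_mm + 2 * edge_tol_mm


def joint_fits(nominal_mm: float, edge_tol_mm: float, min_mm: float, max_mm: float) -> bool:
    """True if every joint width in the tolerance range stays inside [min_mm, max_mm]."""
    narrowest, widest = joint_range(nominal_mm, edge_tol_mm)
    return narrowest >= min_mm and widest <= max_mm


# "Perfectly flush" as modeled: 0 mm joint, ±5 mm per edge.
print(joint_range(0.0, 5.0))              # (-10.0, 10.0): up to 10 mm overlap or a 10 mm gap
print(joint_fits(0.0, 5.0, 0.0, 0.0))     # False
# A designed 20 mm joint against an assumed 10 to 30 mm allowable range.
print(joint_fits(20.0, 5.0, 10.0, 30.0))  # True
```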

And this is where execution fails before construction even begins.

A perfect digital model is worthless if it isn’t execution-ready.

Why Other Industries Solved This Decades Ago (And Why Construction Hasn’t)

This problem isn’t unique to construction. It’s just that other industries have already solved it.

In aerospace and automotive, execution failures like this are rare. Boeing doesn’t design a fuselage and “hope” it aligns in fabrication. Manufacturing constraints (material tolerances, weld stresses, assembly sequences) are embedded into the digital model from day one. That’s why aerospace execution failure rates are below 1%, while construction rework exceeds $100B annually.

The difference? Other industries model for execution.

Architecture still models for documentation.

That’s the gap.

The Only Fix: Execution-First Modeling

We can’t keep assuming that highly detailed models will “just work” in fabrication. Execution-first modeling means building models that reflect real-world constraints, not just design intent.

It looks different in three key ways:

First, fabrication constraints must be embedded into design models from the start.

- Instead of assuming steel connections will align perfectly, models must account for cumulative tolerance drift.

- Instead of assuming precast elements fit without adjustments, models must factor in on-site installation variability.
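One way a model could account for both cumulative drift and installation variability is a Monte Carlo estimate. The sketch below makes several assumptions: fabrication error is uniform within ±tolerance, installation error is normally distributed with a chosen standard deviation, and the run closes at a single adjustable connection (for example, slotted holes) with a fixed capacity. `drift_exceedance` and all parameter values are hypothetical.

```python
import random


def drift_exceedance(n_members: int, fab_tol_mm: float, install_sd_mm: float,
                     capacity_mm: float, trials: int = 10_000, seed: int = 1) -> float:
    """Estimate the probability that cumulative drift along a run of members
    exceeds the adjustment capacity available where the run closes."""
    rng = random.Random(seed)  # fixed seed so results are repeatable
    failures = 0
    for _ in range(trials):
        drift = 0.0
        for _ in range(n_members):
            drift += rng.uniform(-fab_tol_mm, fab_tol_mm)  # assumed: fabrication error uniform within ±tol
            drift += rng.gauss(0.0, install_sd_mm)         # assumed: installation error normal, mean 0
        if abs(drift) > capacity_mm:
            failures += 1
    return failures / trials


# 20 beams at ±2 mm, 1 mm installation scatter, 10 mm of adjustment at the closing connection.
p = drift_exceedance(20, 2.0, 1.0, 10.0)
print(f"Estimated chance the run will not close: {p:.1%}")
```

Unlike a worst-case sum, this produces a probability. That probability can be compared against a risk threshold to decide where additional adjustable connections belong.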

Second, AI-driven execution validation must replace traditional clash detection.

- Standard clash detection identifies geometry issues but does not predict fabrication misalignments.

- New AI tools must simulate real-world buildability failures before models are signed off.
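Clash detection does not check installation order. As one narrow example of a buildability check that could run before sign-off, this sketch validates a proposed installation sequence against prerequisite relationships using Python’s standard `graphlib` module (3.9+). It is a plain rule-based check, not an AI tool. The element names and dependency data are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def sequence_violations(depends_on: dict[str, set[str]], sequence: list[str]) -> list[str]:
    """List every element the proposed sequence installs before one of its prerequisites."""
    position = {name: i for i, name in enumerate(sequence)}
    problems = []
    for element, prereqs in depends_on.items():
        if element not in position:
            problems.append(f"{element}: missing from the sequence")
            continue
        for pre in sorted(prereqs):
            if pre not in position:
                problems.append(f"{element}: prerequisite {pre} is never installed")
            elif position[pre] > position[element]:
                problems.append(f"{element}: installed before its prerequisite {pre}")
    return problems


def feasible_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return one valid installation order, or raise if the dependencies are circular."""
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as err:
        raise ValueError(f"circular installation dependency: {err.args[1]}") from err


depends_on = {
    "column-C1": set(),
    "beam-B1": {"column-C1"},
    "deck-D1": {"beam-B1"},
    "facade-F1": {"deck-D1"},
}
proposed = ["column-C1", "facade-F1", "beam-B1", "deck-D1"]
print(sequence_violations(depends_on, proposed))
# ['facade-F1: installed before its prerequisite deck-D1']
print(feasible_order(depends_on))
# ['column-C1', 'beam-B1', 'deck-D1', 'facade-F1']
```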

Third, models must be dynamically updated during fabrication.

- A static model guarantees execution failure.

- Leading firms already integrate live fabrication data into their models, ensuring that what is being built aligns, in real time, with what was designed.
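Here is a minimal sketch of feeding fabrication data back into a model. It assumes measurements arrive as element-ID-to-measured-length pairs in millimeters, and that a run of elements closes at one adjustable connection with a fixed capacity. The `ModelElement` record and both helper functions are hypothetical. Real fabrication and BIM systems expose their own data formats.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelElement:
    """Hypothetical model record: design intent plus an as-fabricated measurement slot (mm)."""
    element_id: str
    design_length_mm: float
    tol_mm: float
    measured_length_mm: Optional[float] = None

    @property
    def deviation_mm(self) -> float:
        # Until a measurement arrives, the element is assumed to match design intent.
        if self.measured_length_mm is None:
            return 0.0
        return self.measured_length_mm - self.design_length_mm


def apply_fabrication_data(model: list[ModelElement], measurements: dict[str, float]) -> list[str]:
    """Write incoming measurements into the model and return IDs that exceed their tolerance."""
    by_id = {e.element_id: e for e in model}
    out_of_tol = []
    for element_id, measured in measurements.items():
        element = by_id[element_id]
        element.measured_length_mm = measured
        if abs(element.deviation_mm) > element.tol_mm:
            out_of_tol.append(element_id)
    return out_of_tol


def remaining_margin(model: list[ModelElement], capacity_mm: float) -> float:
    """Adjustment left at the closing connection: capacity minus measured drift
    minus the worst-case drift the not-yet-measured elements could still add."""
    measured_drift = sum(e.deviation_mm for e in model if e.measured_length_mm is not None)
    pending_worst = sum(e.tol_mm for e in model if e.measured_length_mm is None)
    return capacity_mm - abs(measured_drift) - pending_worst


model = [ModelElement(f"B{i}", 6000.0, 2.0) for i in (1, 2, 3)]
flagged = apply_fabrication_data(model, {"B1": 6001.5, "B2": 6002.5})
print(flagged)                          # ['B2']
print(remaining_margin(model, 10.0))    # 4.0
```

A negative margin would signal that the run can no longer be guaranteed to close, before the remaining elements are fabricated.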

(If execution-first modeling is something your firm is looking to implement, our latest guide outlines exactly how leading firms are eliminating these failures.)